Kai Siedenburg investigates how people with hearing problems can fully experience the joy of music again. Martin Bleichner is more interested in disturbing noises in everyday life. The two researchers nonetheless want to work together.

When Dr Martin Bleichner gives a talk he often holds a pen in his hand. Not to point at things or to take notes, but to make little “clicks” by pressing the end every now and then. “After a few minutes, when I eventually put the pen down, the reaction is almost always the same: half of the audience is visibly relieved; the other half will either have barely noticed the clicking or have chosen to ignore it,” the neuropsychologist explains.

Since 2019 he has headed the Emmy Noether Group “Neurophysiology of Everyday Life” at the Department of Psychology, which studies how we perceive sounds in everyday life – in particular unwanted sounds, generally referred to as noise. “Many find it hard to believe that different people perceive the same sensory impressions in entirely different ways,” says Bleichner.

One example of this is synaesthesia, a phenomenon that produces non-separated sensory perceptions. Synaesthetes, as people with synaesthesia are called, might perceive letters or sounds as inherently coloured, or perceive touch simultaneously with sound or taste sensations. Bleichner’s research concentrates on auditory stimuli and his aim is to create a visual rendition of the effects they trigger in different people’s brains.

Music sounds completely different with hearing impairments

Were Bleichner to stand in front of an older audience, the number of people irritated by the clicking would be lower. This is something his colleague Dr Kai Siedenburg knows from his own research. Some listeners would not hear the clicking at all, even if they tried. After all, one in two people over the age of 70 has some form of hearing impairment.

Although this might come in handy with undesirable sounds, it can be especially annoying for music lovers. Concerts, recorded music and radio all sound very different to people with hearing problems. Exactly how they sound different, however, has no single answer. “The experience of listening to music with hearing impairments varies dramatically because types of hearing loss themselves vary so dramatically,” Siedenburg explains.

He has been conducting research and teaching at the University of Oldenburg since 2016, and was awarded a Freigeist fellowship from the Volkswagen Foundation in 2019 to research music perception and processing. His “Music Perception and Processing Lab” is based at the Department of Medical Physics and Acoustics.

Hearing researchers with supposedly fundamentally different interests

Both scientists are hearing researchers, although at first glance their key interests couldn’t be more different. While Bleichner’s main focus is unwanted noise and individual noise perception, Siedenburg pursues beautiful sounds – and ways to help people suffering from hearing problems enjoy them again. But perhaps the search for sounds perceived as noise is not so different from the search for the perfect sound after all?

“People with hearing problems often complain that music sounds faded to them, that they are no longer able to pick out individual instruments and that the sound quality in general is unpleasant,” Siedenburg says. Standard hearing aids seldom improve the situation, mainly because they tend to be optimized for helping people to follow conversations.

“Hearing aids concentrate on the volume levels in normal speech,” Siedenburg explains. Music, on the other hand, especially in concerts, can be both very loud and very quiet and is thus outside this range – just one of the problems that hearing aids have yet to successfully address. Many functions in hearing aids today that help their wearers to understand speech actually make it more difficult to hear music.

Transmit music in such a way that it gives pleasure again

This is something Siedenburg wants to change with his psychoacoustics research. This discipline examines the connection between the physical auditory stimulus and its auditory perception. Siedenburg’s aim is to better understand these connections and find ways to transmit music so that, when technologically tweaked to the individual’s needs, even the hard of hearing can enjoy listening to it again.

His central question here is: How to mix a musical signal – whether played live or from a recording – so that music fans with hearing aids can hear everything the composition has to offer?

To answer this question Siedenburg and his team, which includes three PhD students, want to pinpoint the critical factors in listening to music and find out which of these change with hearing loss. One experiment they conduct involves playing a short sequence of music featuring several instruments played simultaneously to younger people with normal hearing and older people with moderate hearing loss. The test persons then listen to two shorter sequences featuring a melody or a single instrument and are asked to identify those which they already heard in the first musical mixture.

Listening tasks are easier for music enthusiasts

Siedenburg found that sequences had to be played at a much higher volume for the older participants to be able to perceive them at all, let alone match them with the musical sounds they had previously heard. This was not the case for the younger test subjects. Another interesting finding: How well a person performs in the experiment depends not only on their hearing ability, but also on their musical training. Anyone who has been deeply involved with music, making music themselves for example, will find the hearing exercise easier than someone in the same age group with no such experience.

To get a sense of which sequences in a piece of music are important enough to merit adapting their volume for hearing-impaired listeners, Siedenburg and his PhD student Michael Bürgel studied the levels of interest that the various instruments in pop songs provoked in listeners. They found that participants in the experiment were less likely to remember the bass track than the vocal track, which they almost always remembered.

The researchers were particularly surprised to observe that – compared with all other instruments in the experiment – it made little difference whether the test persons were told in advance to pay special attention to the voice, or whether they were only asked afterwards if they had perceived it: almost all of them remembered the vocal track correctly. These results show that when it comes to pop music, listeners pay much more attention to the human voice than anything else.

The human voice in music is especially salient

One reason for this bias could be that pop music as a genre often focuses on the singing voice, so this could mean that listeners have learned to concentrate more on that, Siedenburg explains. But this high level of attention could also result from imperfections in the human voice. “No matter how well and precisely someone sings: singing is never perfect,” Siedenburg notes.

When small imprecisions become major mistakes – like when the child next door is practising the violin for the first time and manages to elicit the most piercing screeching sounds from the instrument – Bleichner’s interest as a neuropsychologist is piqued. The point at which a person experiences a sound as unpleasant differs from individual to individual. And the same person may also experience the same sound in very different ways. “Whether or not I am bothered by the sound of a motorbike depends on whether or not I am sitting on it,” Bleichner adds to prove his point.

He uses an electroencephalogram (EEG) to look into people’s heads and examine the effects that everyday sounds have on the brain. “A lot of what we know about how the brain functions has been learned by studying test persons for an hour or two in the lab,” he says. They have to sit as still as possible so that the researchers can record their brainwaves without disturbances caused by movement. External influences are eliminated as much as possible.

“This allows us to get a good look at the brain but has very little to do with daily life,” the researcher explains. Which is why he takes another path and measures the brainwaves of his test persons in everyday situations – working, eating or going for a walk. His team includes one postdoctoral researcher and five doctoral candidates.

What do people hear when you do not tell them what to focus on in advance?

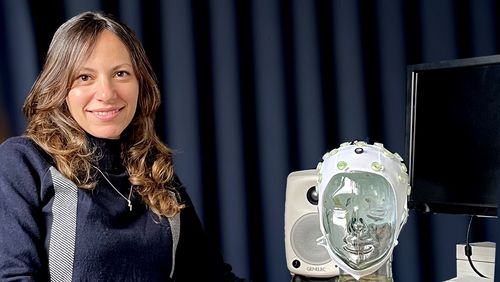

Over the past few years he has been developing and continually improving a method for making these measurements, in collaboration with neuropsychologist Prof. Dr Stefan Debener, a fellow researcher in the Psychology Department. Their cEEGrid is a C-shaped adhesive sticker that is placed around the outer ear with the help of a small amount of sensor gel.

Unlike the clunky, traditional EEG caps, this one is almost invisible. Ten tiny electrodes attached to this ultra-thin piece of plastic measure brainwaves and the data is then transferred into a small amplifier, which in turn sends it to a smartphone for recording. “So you could even wear it to a family gathering without raising eyebrows,” Bleichner says.

In his experiments the researcher looks for certain waveforms in the EEG. These “event-related potentials”, or ERPs, are triggered by auditory stimuli. The same stimulus can cause different ERPs depending, for example, on whether the people in a test setup pay attention to a particular sound or have been instructed to ignore it. Neuroscientists typically use a headpiece that looks like a swimming cap and has up to 96 electrodes attached to it to measure what happens in the brain.

Bleichner’s PhD student Arnd Meiser recently demonstrated the validity of working with ear-only EEGs in an experiment that, despite being lab-based, provides a number of key findings for future studies in everyday life.

Dispensing with measuring points is supposed to make EEG measurements more practical in everyday life

In this experiment 20 test persons completed a number of hearing tests. They were wearing regular EEG caps with 96 measuring points so that the researchers could measure the typical potentials generated during assigned tasks. The scientists then compared the results with those that had been recorded using only electrodes located directly around the ears.

What they found was that, on average, the ear electrodes registered a similar level of intensity in audio-specific brain activity to conventional EEGs. However – as Meiser and Bleichner learned for their future research – there is no single way of placing the electrodes which is equally suited to all experiments. Instead they need to be fitted individually for each test person, and their placement needs to be adapted to the specific ERPs that the researchers want to observe.

What does noise trigger in individuals?

Individuality underpins all Bleichner’s research. He is not interested in how noise affects people in general. “The link between chronic noise pollution and cardiovascular or other health issues is very well known,” he says. The neuropsychologist is much more interested in how noise affects individuals. What do the brainwaves look like in a person who feels stressed by an everyday sound? And how do they differ from those of a person who might be able to block out Bleichner’s pen-clicking, for example?

To better conduct research in everyday situations, Bleichner’s mid-term goal is to record the brain activity of test subjects over extended periods of time, like in a long-term ECG. The researchers have developed a special app for this purpose that registers changes in the audio environment without recording the sounds themselves or even conversations.

“What happens in a test subject’s brain if you don’t tell them beforehand what or what not to focus on?” asks Bleichner. The possibility of measuring brainwaves over an extended period in everyday situations, he hopes, will open a door on phenomena that no one yet knows about. “I’m sure this technology will advance our work, because it will allow us to research aspects that we have never looked at properly before,” Bleichner says of the ear EEG.

Siedenburg fills research gap

Until recently, very little was known about the effects of hearing impairments on the perception of music. Musicologist Siedenburg has since shed some light on the situation. “When I came to Oldenburg as a postdoc researcher I wondered why no one had worked on this topic before,” Siedenburg recalls. His own doctoral thesis in Montréal on musical timbres still informs his research today.

In order to tailor music transmission to the needs of people with impaired hearing, he needs to find out what makes each tone in a piece of music distinctive. Timbre plays a critical role here. It is what allows us to distinguish between a violin and a piano, for example, even if both are playing the same tone at the same volume.

“If a piece of music were a stew, then the timbre would be the taste of the individual ingredients. Sometimes, as with a juicy goulash that has been stewing for a long time, a piece of music that aims to blend the sounds of all the instruments makes it very hard to identify the various individual elements. With other pieces of music, the different timbres are very easy to distinguish from one another, like in a vegetable soup where the individual ingredients all taste very different,” Siedenburg explains.

The timbre is one of the musical components that needs to be artificially modified to enable people with hearing difficulties to perceive it again. Here Siedenburg and his team let test subjects use the mixer themselves. In an ongoing experiment, they are invited to adjust the levels on a simplified mixing interface to optimize how the music sounds to them. The researchers hope this will allow them to pinpoint the preferences that all people with hearing impairments may have in common when listening to music, despite their many differences.

One thing that people with hearing impairments all share is a strong desire for improvement. This is something Siedenburg encounters on a daily basis. He frequently gets calls from people suffering from hearing loss who have read about his work and want to know how far the scientist has got in finding a solution.

The desire to enjoy music as one did in the past is great among people with age-related hearing loss.

However, it will take some time before the people who call him are able to benefit from Oldenburg research at a concert and hear music almost as well as they once did. But they can at least dream about how Music Listening 2.0 might work for them in the future – when their hearing aids are connected to their smartphones, which receive all the relevant signals of the music being played at the concert via a microphone installed on the stage, for example. And since the app will know everything about their individual hearing impairments, it will be able to mix the incoming music so that when it is played via the hearing aid it sounds just like it used to – or even better.

To move one step closer to this dream, the two hearing researchers Siedenburg and Bleichner will soon start working together. Although one may be mainly interested in noise and the other in music, there is one area in which their expertise is entirely complementary. “In the project with Martin Bleichner, we want to use EEG signals to decode, for example, whether someone is following the bass line or the vocals at a given moment,” Siedenburg says.

And this is why Bleichner will make an exception and actively seek out beautiful musical sounds for a change – so that in the future, even people for whom music is now just noise will be able to enjoy it once again.