Research topics

Music and hearing health

Until recently, hearing impairment has been a blind spot on the map of music perception research. We aim to provide the conceptual and empirical groundwork needed to optimize hearing aids for music. This involves a host of questions: How do listeners parse and organize complex musical scenes? How is music listening affected by hearing loss? Hearing aids are currently optimized for speech; how can we improve music listening with hearing aids?

Collaborators:

Alinka Greasley (Leeds University), Daniel Müllensiefen (Goldsmiths, University of London), Lorenzo Picinali (Imperial College London), Kirsten Wagener (Hörzentrum Oldenburg), Volker Hohmann (Uni Oldenburg), Steven van de Par (Uni Oldenburg), Gunter Kreutz (Uni Oldenburg)

Key Publications:

K. Siedenburg, K. Goldmann, S. van de Par (2021). Tracking instruments in Bach’s The Art of the Fugue: Timbral heterogeneity differentially affects younger normal-hearing listeners and older hearing-aid users. Frontiers in Psychology, 12, 608684, doi: 10.3389/fpsyg.2021.608684.

K. Siedenburg, S. Röttges, K. C. Wagener, V. Hohmann (2020). Can you hear out the melody? Testing musical scene perception in young normal-hearing and older hearing-impaired listeners. Trends in Hearing, 24, 1–15, doi: 10.1177/2331216520945826

Timbre & pitch perception

What is timbre and what does it do in music and speech? How do pitch and timbral brightness perception differ? What are the acoustic and cognitive factors affecting these auditory attributes? By gaining a better understanding of these questions, we seek to improve our general understanding of auditory perception.

Collaborators:

Stephen McAdams (McGill University), Charalampos Saitis (Queen Mary University of London), Daniel Pressnitzer (Ecole Normale Supérieure, Paris), Henning Schepker (Starkey Hearing), Christoph Reuter (University of Vienna).

Key Publications:

Siedenburg, K., Graves, J., and Pressnitzer, D. (2023). A unitary model of auditory frequency change perception. PLOS Computational Biology, 19(1):1–30. https://doi.org/10.1371/journal.pcbi.1010307

K. Siedenburg, F. M. Barg, H. Schepker (2021). Adaptive auditory brightness perception. Scientific Reports, 11, 21456, doi: 10.1038/s41598-021-00707-7

Siedenburg, K., Saitis, C., McAdams, S., Popper, A. N., and Fay, R. R. (2019). Timbre: Acoustics, Perception, and Cognition. Springer Handbook of Auditory Research. Springer Nature, Heidelberg, Germany.

Acoustical modeling

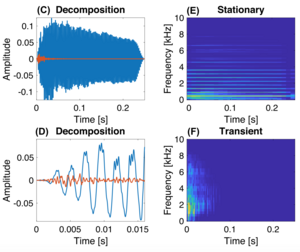

We develop models of sounds that shed light on how acoustic information is exploited by the human auditory system. One example is a transient extraction algorithm that helped to isolate transients and onsets more precisely and to study their role in instrument identification. [Sound examples]

Collaborators:

Simon Doclo (Uni Oldenburg), Marc René Schädler (Uni Oldenburg)

Key Publications:

K. Siedenburg, S. Jacobsen, C. Reuter (2021). Spectral envelope position and shape in sustained instrument sounds. The Journal of the Acoustical Society of America, 149(6), 3715–3727, doi: 10.1121/10.0005088.

K. Siedenburg, M. R. Schädler, D. Hülsmeier (2019). Modeling the onset advantage in musical instrument recognition. The Journal of the Acoustical Society of America, 146(6), EL523–EL529

Siedenburg, K. and Doclo, S. (2017). Iterative structured shrinkage algorithms for stationary/transient audio separation. In Proc. of the 20th Int. Conf. on Digital Audio Effects (DAFx-17), Edinburgh, Sep 5–8. [Best Paper Award]