Technology

The project group uses a range of technical components, from widely used container virtualisation with Docker to specialist simulation tools such as CARLA.

ROS, ADORe and CARLA are used specifically in the project group.

- ROS stands for Robot Operating System. It is an open source platform that is specially designed for the development of robot software.

- CARLA is an open source simulator platform for autonomous vehicle research and development.

- ADORe (Automated Driving Open Research) is a research project of the DLR (German Aerospace Centre) that focuses on the development of automated driving capabilities for vehicles in real road traffic.

A suitable architecture is needed to meet the goals and requirements that have been defined. Because ADORe is used, the solution developed by the project group must be integrated into an existing system.

ROS - Robot Operating System

Despite its name, ROS is not an operating system in the strict sense but an open-source middleware framework for robots that connects different components simply and efficiently. ROS is based on a service-oriented architecture in which separate processes, known as nodes, interact with each other by exchanging messages.

This is particularly important for robots, as they usually consist of a large number of different sensors, actuators, displays and other components that need to work together efficiently. One of the main goals of ROS is the reusability of code in research and development. The aim is to define a standard so that not every sensor requires individual code. There are currently thirteen different ROS distributions, which are divided into two different ROS versions, ROS1 and ROS2.

ROS1 is used in the project group, as this version is used by ADORe.
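
The idea of nodes that interact only via messages can be illustrated with a minimal sketch. It is plain Python and deliberately does not use the ROS API. The class `TopicBus` and the topic names are hypothetical and serve only to show that the sender and the receiver do not need to know each other.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class TopicBus:
    """In-process stand-in for topic-based message passing (not the ROS API)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        # Register a callback that is invoked for every message on the topic.
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        # Deliver the message to all callbacks registered for this topic.
        for callback in list(self._subscribers[topic]):
            callback(message)


bus = TopicBus()
received = []

# "Planner node": consumes detected objects and knows nothing about the sender.
bus.subscribe("/detected_objects", received.append)

# "Sensor node": publishes without knowing who is listening.
bus.publish("/detected_objects", {"id": 1, "type": "car"})
bus.publish("/unused_topic", "nobody is listening")
```

In ROS the same decoupling is what allows one sensor driver to be reused across projects: a consumer depends on the topic and message type, not on the concrete sensor code.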

CARLA - Simulation tool

CARLA is an open-source driving simulator with a focus on autonomous driving, which has been under development since 2017 (current version: 0.9.14 - as of Oct 2023). CARLA offers a realistic platform for testing and development in the field of autonomous driving.

The driving simulator is based on Unreal Engine 4, which provides a realistic physics simulation.

CARLA has already established itself in many projects in industry and research, which is due to the open source status of the software and the well-documented Python API.

This API enables simple communication and control of the simulation.

CARLA version 0.9.13 is used within the project group, as ADORe requires this version.

In version 0.9.13, CARLA provides eight different maps, which represent motorway-like roads (American highways), country roads and urban traffic at varying levels of complexity.
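
As a rough illustration of the Python API, the following sketch asks a running CARLA server which maps it offers. It assumes a server reachable on CARLA's default port 2000. The function names `list_maps` and `short_map_names` are hypothetical helpers and are not part of CARLA or of the project code.

```python
from typing import Iterable, List


def short_map_names(map_paths: Iterable[str]) -> List[str]:
    """Reduce asset paths such as '/Game/Carla/Maps/Town01' to 'Town01'."""
    return sorted(path.rsplit("/", 1)[-1] for path in map_paths)


def list_maps(host: str = "localhost", port: int = 2000) -> List[str]:
    """Ask a running CARLA server for its available maps."""
    # Imported lazily so that the helper above also works without CARLA installed.
    import carla

    client = carla.Client(host, port)
    client.set_timeout(10.0)  # seconds to wait for the server before giving up
    return short_map_names(client.get_available_maps())
```

With a simulator running, `list_maps()` returns the short map names. The client and server versions should match, which is one reason for pinning the version required by ADORe.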

ADORe - Toolkit

ADORe is a software toolkit for decision-making and trajectory planning for automated vehicles. Specifically, ADORe plans trajectories, makes decisions in road traffic on this basis and outputs these decisions as control instructions. In a demonstration, the DLR Institute of Transportation Systems used ADORe to drive a route through urban Braunschweig. The demonstration vehicle is equipped with various sensors, including V2X, GNSS and seven LiDAR sensors. A display inside the vehicle shows it from a bird's-eye view. This visualisation is based on an OpenDRIVE map. This raises the question of how the available sensor technology can be combined with the ADORe toolkit to realise an automated vehicle.

To automate a vehicle as in the demonstration by the DLR Institute of Transportation Systems, a further SENSE component based on sensor technology is required in addition to ADORe.

Rough structure

The software solution is integrated between several existing systems. The architecture currently comprises ADORe, adore_if_carla and the CARLA-ROS-Bridge. The ACDC-Main software solution to be developed will be integrated into this architecture. The aim is to implement a perception component that meets the requirements and replaces the perception that adore_if_carla currently provides to ADORe.

The figure shows all the components used and how they communicate with each other. All communication in the ROS block takes place using ROS messages. ACDC-Main obtains data from the CARLA-ROS-Bridge and forwards this data to the ADORe perception API. A complete replacement of adore_if_carla by ACDC-Main is not possible, as the return path from ADORe (vehicle control) also runs via adore_if_carla. Therefore, only the perception path from adore_if_carla to ADORe (indicated by the red cross) must be removed.
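
The change to the communication paths can be written down as a small sketch. It models only the links named in the text. The content labels, the type `Link` and the function names are illustrative assumptions and do not reflect actual ROS topic names.

```python
from typing import Set, Tuple

# (sender, receiver, content) as named in the text; content labels are illustrative.
Link = Tuple[str, str, str]

BEFORE: Set[Link] = {
    ("adore_if_carla", "ADORe", "perception"),
    ("ADORe", "adore_if_carla", "vehicle control"),
}


def integrate_acdc_main(links: Set[Link]) -> Set[Link]:
    """Return the link set after ACDC-Main takes over perception."""
    updated = set(links)
    # Remove only the perception path marked with the red cross.
    updated.discard(("adore_if_carla", "ADORe", "perception"))
    # ACDC-Main reads from the bridge and feeds the ADORe perception API.
    updated.add(("CARLA-ROS-Bridge", "ACDC-Main", "sensor data"))
    updated.add(("ACDC-Main", "ADORe", "perception"))
    return updated


def perception_sources(links: Set[Link]) -> Set[str]:
    """Components that deliver perception data to ADORe."""
    return {src for src, dst, content in links
            if dst == "ADORe" and content == "perception"}


AFTER = integrate_acdc_main(BEFORE)
```

After the integration, ACDC-Main is the only perception source for ADORe, while the vehicle control link from ADORe to adore_if_carla remains untouched.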

Sensor setup of the simulation vehicle

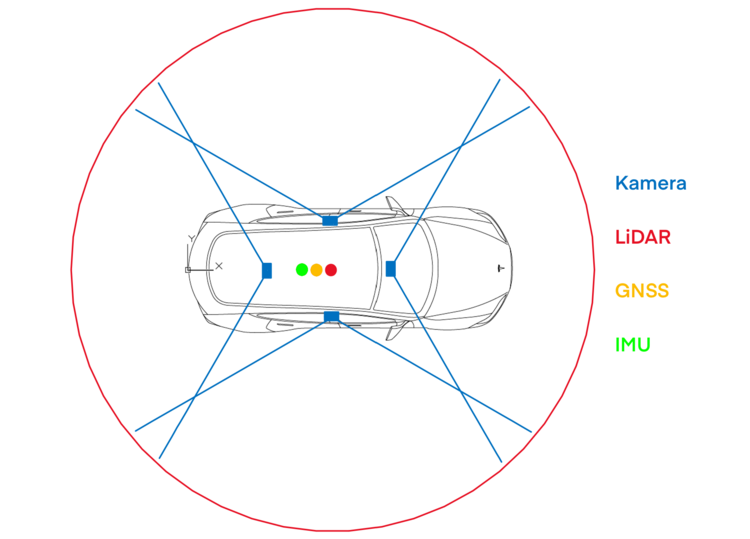

The sensors and their positions are crucial, as they influence the overall perception and therefore the results. To avoid arbitrary sensor choices and positions, the sensor setup in the simulation is based on real vehicles. The positions and specifications of the cameras follow the Tesla Model 3, which was chosen because it is available as a vehicle in CARLA and its camera positions are known. Tesla uses the Sony IMX490 sensor in hardware version 4.0. The project adopts this sensor but reduces the number of cameras to four in order to minimise complexity. This reduction also entails a change in the FOV: in contrast to Tesla, the FOV of all cameras is set to 120 degrees. In addition to the cameras, the sensor setup includes a LiDAR sensor whose position and specification are based on the FAScar, a research platform developed by DLR that carries a 360-degree LiDAR sensor on the roof of the vehicle.

Four cameras are attached to the ego vehicle: a front camera in the rear-view mirror mount, one camera each on the left and right B-pillars and a camera in the centre of the rear window. The positions of the front, left and right cameras exactly mirror those of the Tesla Model 3. The rear camera has been moved slightly towards the centre to reduce the blind spot. The ego vehicle also has a LiDAR sensor, which is positioned in the centre of the roof. In addition to the cameras and the LiDAR sensor, the car has a GNSS sensor and an IMU, both installed in the centre of the car.

| Sensor | Quantity | Position | Specification |
|---|---|---|---|
| IMU | 1 | Centre of the car | Model: PixHawk 2.1 |
| GNSS | 1 | Centre of the car | Model: Trimble |
| Camera | 4 | Front camera: rear-view mirror mount | Model: Sony IMX490; FOV: 120°; Resolution: 2880 x 1860 px; Frame rate: 20.8 FPS |
| LiDAR | 1 | Centre of the roof | Model: Velodyne; Range: 100 m |
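
The sensor setup can be expressed as a small configuration sketch. The mapping to CARLA blueprint IDs and attribute names (`fov`, `image_size_x`, `image_size_y`, `sensor_tick`, `range`) is an assumption about how the setup might be passed to the simulator and is not taken from the project code. `SensorSpec` and `camera` are hypothetical names. Mounting coordinates are omitted because the text gives none.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SensorSpec:
    name: str
    blueprint: str  # CARLA blueprint ID (assumed mapping)
    attributes: Dict[str, str] = field(default_factory=dict)


CAMERA_FPS = 20.8
CAMERA_TICK = 1.0 / CAMERA_FPS  # seconds between two frames, about 0.048 s


def camera(name: str) -> SensorSpec:
    """All four cameras share the same specification."""
    return SensorSpec(name, "sensor.camera.rgb", {
        "fov": "120",
        "image_size_x": "2880",
        "image_size_y": "1860",
        "sensor_tick": f"{CAMERA_TICK:.4f}",
    })


SENSOR_SETUP: List[SensorSpec] = [
    camera("camera_front"),  # rear-view mirror mount
    camera("camera_left"),   # left B-pillar
    camera("camera_right"),  # right B-pillar
    camera("camera_rear"),   # centre of the rear window
    SensorSpec("lidar", "sensor.lidar.ray_cast", {"range": "100"}),  # metres
    SensorSpec("gnss", "sensor.other.gnss"),
    SensorSpec("imu", "sensor.other.imu"),
]
```

Attribute values are kept as strings because CARLA blueprints accept string values in `set_attribute`. The frame rate of 20.8 FPS corresponds to a sensor tick of roughly 0.048 seconds.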